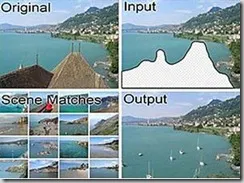

The algorithm uses a dataset of 2.3 million photographs downloaded from a community sharing Web site to find good scene matches for a given image. The pixels from these matching photos are then used to fill in the hole in a seamless and "semantically valid" way.
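To make the fill-in step concrete, here is a minimal sketch of the simplest possible version: copy pixels from a matching photo into the hole. It assumes the images are same-sized grids of pixel values and that the hole is given as a grid of booleans; the name `fill_hole` is hypothetical. A plain copy like this leaves visible seams, and the article does not say how the researchers hide them, so this is an illustration of the idea and not their method.

```python
def fill_hole(image, mask, donor):
    """Return a copy of `image` with masked pixels taken from `donor`.

    image, donor: lists of rows of pixel values, same dimensions.
    mask: lists of rows of booleans, True where the pixel is missing.
    Assumption: a plain copy with no blending, so seams may be visible.
    """
    if not (len(image) == len(mask) == len(donor)):
        raise ValueError("image, mask and donor must have the same height")
    return [
        [d if missing else p for p, missing, d in zip(img_row, mask_row, donor_row)]
        for img_row, mask_row, donor_row in zip(image, mask, donor)
    ]


# Tiny 2x2 example: only the top-right pixel is missing.
image = [[1, 2], [3, 4]]
mask = [[False, True], [False, False]]
donor = [[9, 8], [7, 6]]
completed = fill_hole(image, mask, donor)
```

Only the masked pixel changes; every other pixel of the original photograph is left untouched.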

"It's seamless because the human eye can't detect the manipulation and semantically valid because the borrowed pixels appear in context," said Alexei Efros, a computer scientist at Carnegie Mellon University. "A motorcycle wheel and a Ferris wheel have the same basic shape, but one can't be substituted for the other when completing an image."

Existing technology requires an algorithm to go through a long learning process, with constant feedback loops, to improve its decision-making ability. The new technique instead works like a large-scale, data-driven search engine, in the manner of Google, and learns to select data the easy way.

"It searches everything, all 2 million photos, to find images that look similar to the given image," Efros said. How successful is it? "Images completed using the technique fooled a focus group two-thirds of the time, while the best competing technique only fooled them one-third of the time."
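The brute-force search Efros describes can be sketched as a nearest-neighbor scan over the whole collection. The sketch below assumes each photo has already been reduced to a short list of numbers (a "descriptor") and compares only the entries that are known in the incomplete image. The article does not say which descriptor or distance the researchers use, so the names `masked_distance` and `best_matches`, the squared-difference distance, and the toy data are all illustrative assumptions.

```python
import heapq


def masked_distance(query, candidate, known):
    """Sum of squared differences over the entries flagged as known."""
    return sum((q - c) ** 2 for q, c, k in zip(query, candidate, known) if k)


def best_matches(query, known, collection, k=3):
    """Scan every photo and return the k closest (distance, name) pairs.

    collection: dict mapping a photo name to its descriptor (hypothetical data).
    Results are ordered nearest first.
    """
    scored = (
        (masked_distance(query, descriptor, known), name)
        for name, descriptor in collection.items()
    )
    return heapq.nsmallest(k, scored)


# Toy collection of three photos with 4-number descriptors.
collection = {
    "beach": [0.9, 0.8, 0.1, 0.0],
    "street": [0.1, 0.2, 0.9, 0.5],
    "harbor": [0.8, 0.7, 0.9, 0.9],
}
query = [1.0, 0.8, 0.0, 0.0]
known = [True, True, False, False]  # last two entries fall inside the hole
matches = best_matches(query, known, collection, k=2)
names = [name for _, name in matches]
```

Returning several candidates instead of a single winner mirrors the idea of offering the user a set of completions to choose from.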

The researchers believe their algorithm suggests a new way of using large image collections for "brute-force" solutions to many long-standing problems in computer graphics and computer vision.

"Our chief insight is that while the space of images is effectively infinite, the photos people take are actually not that diverse," Efros said. "So for many image completion tasks, we are able to find similar scenes that contain image fragments which will convincingly complete the image."

The algorithm is entirely data-driven and requires no annotations or labeling by the user. Unlike existing image completion methods, it can generate a diverse set of image completions and let users select among them.

The underlying large-scale data-driven search engine for the scene completion technique has a potential application beyond correcting damaged or deficient images. It could be used by the military or law enforcement to estimate where a photograph of a terrorist or a kidnap victim was taken.

"A human expert would be better, but the algorithm could give a rough first pass and narrow down the location," Efros said. "It would help focus the available resources where they need to be."
